Post developed by Catherine Allen-West in coordination with Josh Pasek

ICYMI (In Case You Missed It), the following work was presented at the 2016 Annual Meeting of the American Political Science Association (APSA). The presentation, titled “Motivated Reasoning and the Sources of Scientific Illiteracy,” was part of the session “Knowledge and Ideology in Environmental Politics” on Friday, September 2, 2016.

At APSA 2016, Josh Pasek, Assistant Professor of Communication Studies and Faculty Associate at the Center for Political Studies, presented work that examines why people do not believe in the prevailing scientific consensus.

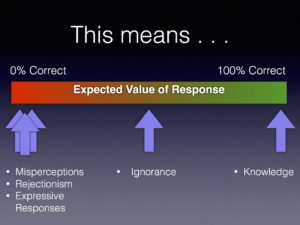

He argues that widespread scientific illiteracy in the general population is not simply a function of ignorance. In fact, there are several reasons why an individual may answer a question about science or a scientific topic incorrectly.

- They are ignorant of the correct answer

- They have misperceptions about the science

- They know what scientists say and disagree (rejectionism)

- They are trying to express some identity that they hold in their response

The typical approach to measuring knowledge involves asking individuals multiple-choice questions where they are presumed to know something when they answer the questions correctly and to lack information when they either answer the questions incorrectly or say that they don’t know.

Pasek suggests that this current model for measuring scientific knowledge is flawed, because individuals who have misperceptions can appear less knowledgeable than those who are ignorant. So he and his co-author Sedona Chinn, also from the University of Michigan, set out with a new approach to disentangle these cognitive states (knowledge, misperception, rejectionism and ignorance) and then determine which sorts of individuals fall into each of the camps.

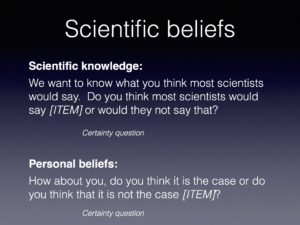

Instead of posing multiple-choice questions, the researchers asked participants what most scientists would say about a given scientific topic (such as climate change or evolution) and then examined how those answers compared to the respondent’s personal beliefs.

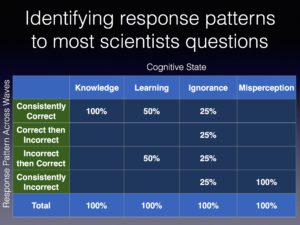

Across two waves of data collection, respondent answers about scientific consensus could fall into four patterns. They could be consistently correct, change from correct to incorrect, change from incorrect to correct, or be consistently incorrect.

This set of cognitive states lends itself to a set of equations producing each pattern of responses:

Consistently Correct = Knowledge + .5 x Learning + .25 x Ignorance

Correct then Incorrect = .25 x Ignorance

Incorrect then Correct = .5 x Learning + .25 x Ignorance

Consistently Incorrect = Misperception + .25 x Ignorance
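To make the algebra concrete, here is a minimal sketch that inverts the four equations above, recovering the share of each cognitive state from the observed share of each response pattern. The function name, argument names, and the example shares are hypothetical and chosen only for illustration; this is not the authors' estimation code, and the numbers are not results from the study.

```python
def estimate_states(cc, ci, ic, ii):
    """Solve the four response-pattern equations for the cognitive states.

    Arguments are the observed shares of respondents who were:
      cc: consistently correct
      ci: correct then incorrect
      ic: incorrect then correct
      ii: consistently incorrect
    (Hypothetical names; the shares are assumed to sum to 1.)
    """
    if abs((cc + ci + ic + ii) - 1.0) > 1e-9:
        raise ValueError("pattern shares must sum to 1")

    # Correct then Incorrect = .25 x Ignorance
    ignorance = 4 * ci
    # Incorrect then Correct = .5 x Learning + .25 x Ignorance
    learning = 2 * (ic - ci)
    # Consistently Correct = Knowledge + .5 x Learning + .25 x Ignorance
    knowledge = cc - 0.5 * learning - 0.25 * ignorance
    # Consistently Incorrect = Misperception + .25 x Ignorance
    misperception = ii - 0.25 * ignorance

    return {
        "knowledge": knowledge,
        "learning": learning,
        "ignorance": ignorance,
        "misperception": misperception,
    }


# Made-up shares, for illustration only.
estimates = estimate_states(cc=0.50, ci=0.05, ic=0.10, ii=0.35)
for state, share in estimates.items():
    print(f"{state}: {share:.2f}")
```

Because the four pattern shares sum to one, the four estimated states do as well. With noisy survey data, a simple inversion like this can return a negative share (for example, when more respondents move from correct to incorrect than the reverse), so the output is best read as a rough illustration of the logic.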

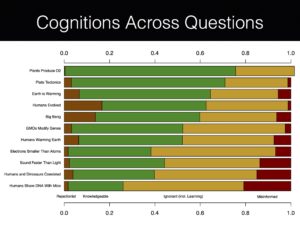

The researchers then reverse-engineered this estimation strategy for a survey aimed at measuring knowledge on various scientific topics. This yielded the following sort of translations:

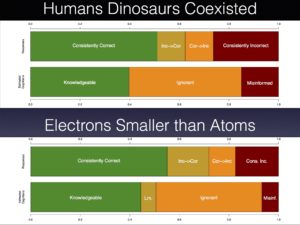

In addition to classifying respondents as knowledgeable, ignorant, or misinformed, Pasek was especially interested in identifying a fourth category: rejectionist. These are individuals who assert that they know the scientific consensus but fail to hold corresponding personal beliefs. Significant rejectionism was apparent for most of the scientific knowledge items, but was particularly prevalent for questions about the big bang, whether humans evolved, and climate change.

Rejectionism surrounding these controversial scientific topics is closely linked to religious and political motivations. Pasek’s novel strategy of separating rejectionism from ignorance and knowledge provides evidence that religious individuals are not simply ignorant of the scientific consensus on evolution, and that partisans are not simply unaware of climate change research. Instead, respondents appear either to hold systematically wrong beliefs about the state of the science or to feel free to diverge in their views from a known scientific consensus.

Pasek’s results show a much more nuanced, yet at times predictable, relationship between scientific knowledge and belief in scientific consensus.