Oct 31, 2014 | Elections, National

Post developed by Katie Brown and Josh Pasek.

Photo credit: ThinkStock

With each election cycle, the news media publicize day-to-day opinion polls, hoping to scoop election results. But surveys like these are blunt instruments. Or so says Center for Political Studies (CPS) Faculty Associate and Communication Studies Assistant Professor Josh Pasek.

Pasek pinpoints three main issues with current measures of vote choice. First, they do not account for day-to-day changes. Second, they capture the present moment as opposed to election day. Finally, they can be misleading due to sampling error or question wording.

Given these problems, Pasek searched for the most accurate way to combine surveys in order to predict elections. The results will be published in a forthcoming paper in Public Opinion Quarterly. Here, we highlight his main findings. Pasek identifies three main strategies for pooling surveys: aggregation, prediction, and hybrid models.

Aggregation, what news organizations call the “poll of polls,” combines the results of many polls. Aggregators must choose which surveys to include and how to combine their results. Because it spreads across many surveys, an aggregation produces more stable results, but it is a much better measure of what is happening at the moment than of what will happen on election day.
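
As a rough illustration of the choices an aggregator faces, here is a minimal sketch of a poll of polls. It is not Pasek's method or any news organization's: the `Poll` record, the 14-day recency window, the sample-size weighting, and the poll numbers are all hypothetical assumptions chosen for clarity.

```python
from dataclasses import dataclass


@dataclass
class Poll:
    days_ago: int     # hypothetical field: days since the poll left the field
    sample_size: int
    share: float      # proportion backing the candidate, 0 to 1


def poll_of_polls(polls: list[Poll], window_days: int = 14) -> float:
    """Sample-size-weighted average of recent polls.

    Which polls to keep (here: a recency window) and how to weight them
    (here: by sample size) are the aggregator's choices; both are illustrative.
    """
    recent = [p for p in polls if p.days_ago <= window_days]
    if not recent:
        raise ValueError("no polls fall inside the window")
    total_n = sum(p.sample_size for p in recent)
    return sum(p.share * p.sample_size for p in recent) / total_n


polls = [
    Poll(days_ago=3, sample_size=1000, share=0.48),
    Poll(days_ago=7, sample_size=500, share=0.52),
    Poll(days_ago=30, sample_size=800, share=0.40),  # outside the window, dropped
]
estimate = poll_of_polls(polls)
print(f"{estimate:.3f}")
```

Note that nothing in the calculation looks ahead: the result summarizes opinion over the last two weeks, which is why an aggregation describes the present rather than election day.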

Prediction takes the results of previous elections, current polls, and other variables to extrapolate to election day. The upside of prediction is its focus on election day as opposed to the present and its ability to incorporate information beyond polls. But because these models are designed to test political theories, they typically use only a few variables. As a result, their predictive power may be limited, and it depends on the availability of good data from past elections.

Hybrid approaches use some combination of polls, historical performance, betting markets, and expert ratings to build complex models of elections. Nate Silver’s FiveThirtyEight, which won accolades for accurately predicting the 2012 election, takes a hybrid approach. Because these approaches pull from so many sources of information, they tend to be more accurate. Yet the models are quite complex, making them difficult for most readers to understand.
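
Real hybrid models are far more elaborate, but the core idea of blending sources can be sketched in a few lines. This is a hypothetical illustration, not FiveThirtyEight's model: it mixes only a poll average and a fundamentals forecast, and the linear schedule that shifts weight toward polls as election day approaches (over an assumed 180-day horizon) is an invented choice. Betting markets or expert ratings could enter as further weighted terms.

```python
def hybrid_forecast(poll_average: float, fundamentals_forecast: float,
                    days_to_election: int, horizon_days: int = 180) -> float:
    """Blend a poll average with a fundamentals forecast.

    The weight on polls rises linearly from 0 (horizon_days out) to 1
    (election day); this schedule is an illustrative assumption.
    """
    w_polls = min(1.0, max(0.0, 1 - days_to_election / horizon_days))
    return w_polls * poll_average + (1 - w_polls) * fundamentals_forecast


for days in (180, 90, 0):
    print(days, hybrid_forecast(49.3, 50.2, days))
```

Early in the race the forecast leans on fundamentals; by election day it rests entirely on the polls.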

So which pooling approaches should you look at? That depends on what you want to know. Pasek concludes, “If you want a picture of what’s happening, look at an aggregation; if you want to know what’s going to happen on election day, your best bet is a hybrid model; and if you want to know how well we understand elections, compare the prediction models with the actual results.”

Oct 28, 2014 | Foreign Affairs, Innovative Methodology, International

Post developed by Katie Brown in coordination with Khalil Shikaki.

Can measurement promote democratization in the Arab world? Khalil Shikaki, visiting scholar at the Center for Political Studies (CPS), believes the answer is “yes.” In 2006, he set out to create an instrument to measure both the direction and the sustainability of democratic transition in the Arab world. The resulting Arab Democracy Index is a joint project between the Palestinian Center for Policy and Survey Research (PCPSR), the Arab Reform Initiative, and the Arab Barometer.

The Arab Democracy Index is unique in that it comes from within the Arab world and so reflects local experiences. It also draws on three main sources: data on government actions, reviews of legal and constitutional texts, and public opinion data. Local teams in nine to twelve countries collect these data, tailoring the standardized process based on their local expertise. Together, these sources offer insight into 40 indicators of democracy.

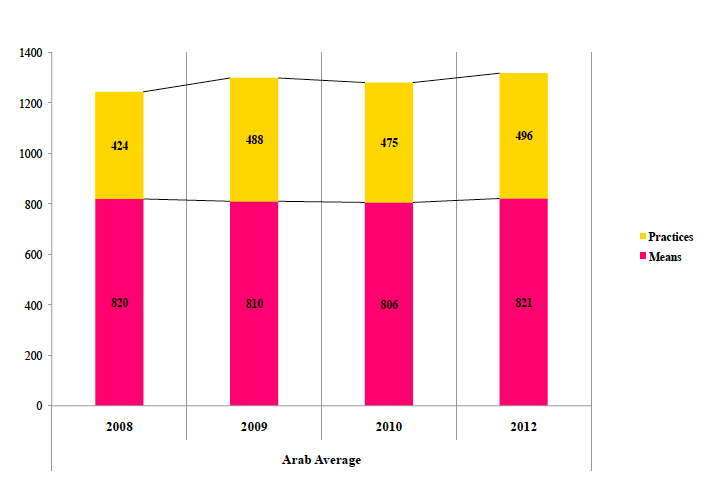

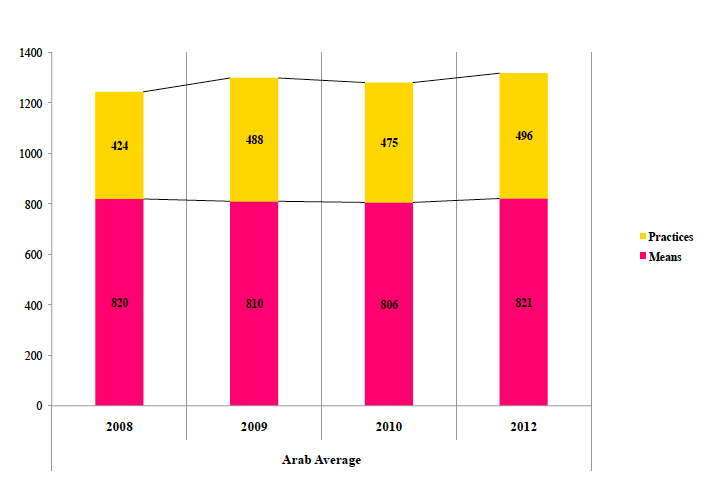

The Arab Democracy Index indicators fall into two types. First, there are Means (e.g., legislation), which speak to democracy de jure. Second, there are Practices (e.g., elections), which speak to democracy de facto. The data in each category are tallied into a total score. Scores below 400 indicate an undemocratic state, 400 to 699 indicate early signs of transition, 700 to 1,000 indicate visible progress toward democracy, and scores above 1,000 indicate an already democratic nation. The graph below displays the results for all Arab countries. Overall, Means remain relatively constant while Practices show signs of improvement.
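
The score bands amount to a simple lookup. The sketch below encodes them; treating a score of exactly 1,000 as "visible progress" follows the stated 700 to 1,000 range, and the example scores are hypothetical rather than actual country results.

```python
def classify_adi(total_score: float) -> str:
    """Map an Arab Democracy Index total score to the bands described in the post.

    Boundary handling is an assumption: 1,000 itself counts as "visible
    progress" because the text gives that band as 700 to 1,000.
    """
    if total_score < 400:
        return "undemocratic"
    if total_score < 700:
        return "early signs of transition"
    if total_score <= 1000:
        return "visible progress toward democracy"
    return "democratic"


for score in (350, 650, 820, 1050):  # hypothetical scores, not real country results
    print(score, classify_adi(score))
```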

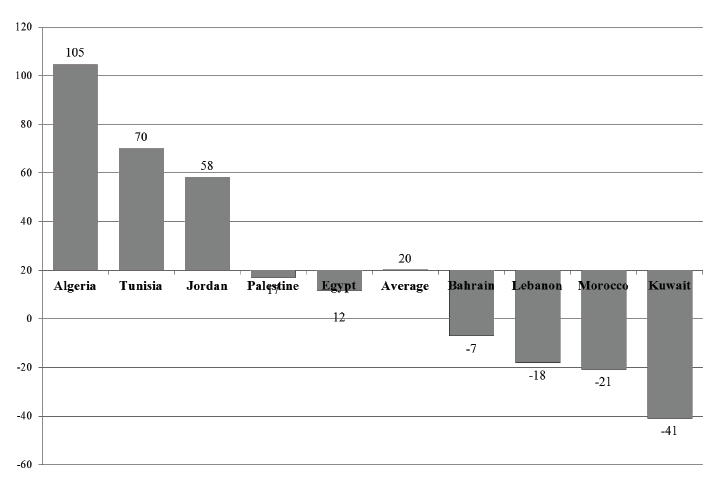

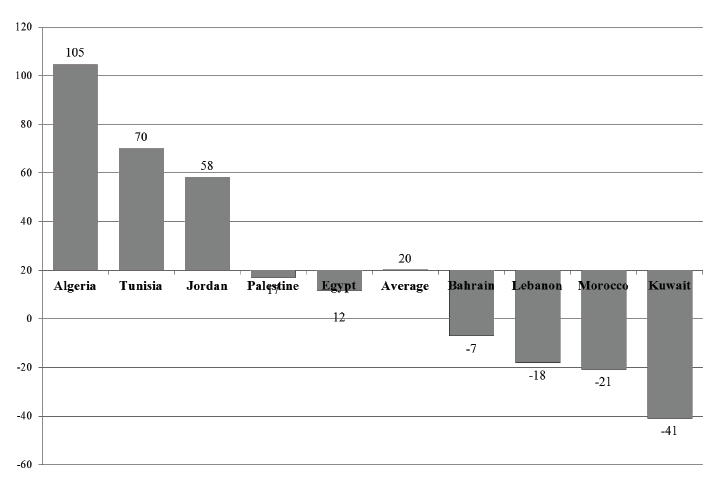

The data can also be broken down by country, as in the graph below. As we can see, some nations are driving this positive trend while others are moving away from democracy during this period.

The results led to reports, issued in 2008, 2009, and 2010, that not only document opinion but also offer policy recommendations tailored to each country. Shikaki notes that while political and civil rights have improved, more must be done. Specifically, he recommends focusing on education reform, social justice, and socio-economic reform. The Arab Democracy Index also underscores a larger point: external pressure from the U.S. Department of State can help bring about democratic change in theory, but change in practice must come from within.

Oct 21, 2014 | Conflict, Innovative Methodology, International

Post developed by Katie Brown and Christian Davenport.

Who did what to whom in 1994 Rwanda? This is the central question driving the GenoDynamics project directed by Center for Political Studies Faculty Associate and Professor of Political Science, Christian Davenport, and former CPS affiliate and current Dean of the Frank Batten School of Leadership and Public Policy at the University of Virginia, Allan Stam.

Last week, Davenport updated the associated website with new, previously unreleased data collected by the project, documents on the topic that can no longer be found elsewhere, and new visualizations and animations of the collected data.

Also added to the website was a page dedicated to a recent BBC documentary, Rwanda’s Untold Story, that features the research. The documentary premiered in Europe on October 1, 2014, and is now available for viewing online. The film itself has prompted some controversy. The most vocal critics call those involved with the documentary “genocide deniers,” a label that Rwandan law applies to anyone who completely denies the genocide or seeks to “trivialize” it or reduce the number of Tutsi victims declared by the government. Others have protested outside BBC headquarters in London. Still others have praised the film for bringing forward a story that they felt was long overdue.

The GenoDynamics website features all of this criticism. But it also offers a glimpse into what the researchers found and how they found it.

Davenport and Stam knew that Rwanda in 1994 was a time of widespread violence when they began investigating in depth in 2000. But they did not know “who was engaged in what activity at what time and at what place.” With funding from the National Science Foundation (NSF), the researchers content-analyzed and compiled data from the Rwandan government, the International Criminal Tribunal for Rwanda (ICTR), Human Rights Watch, African Rights, and Ibuka. They used these sources to produce a Bayesian estimate of the number of people killed in each commune of the country during the 100 days of genocide, civil war, reprisal killings, and random violence. Davenport and Stam also interviewed victims, survivors, and perpetrators in Rwanda, and they surveyed citizens in the town of Butare. Finally, by triangulating information from the Central Intelligence Agency (CIA), a Canadian military satellite image, and Hutu and Tutsi military informants through the ICTR, they created variables for troop movements and zones of control. This allowed them to see who was responsible for killings in different locations.

The work is controversial in many respects, including its degree of transparency (theirs is the only project that has made all relevant data publicly available), but the biggest controversy concerns how it challenges popular understanding. At present, the official story is that one million people were killed by the extremist Hutu government and its associated militias, with most (and in some accounts all) of the victims being Tutsi (upwards of 800,000 by some estimates). But Davenport and Stam found that in 1991 (according to the Rwandan census as well as population projections from the 1950s) only 500,000 Tutsi lived in Rwanda. Because a survivors’ organization reported that 300,000 Tutsi survived, Davenport and Stam concluded that approximately 200,000 Tutsi were killed. While this number is lower than the official figure, it still represents the partial annihilation of the Tutsi population, which includes genocide but likely also other crimes against humanity and human rights violations. The estimate also changes the official story in another way: the results of this research suggest that the majority of those killed in 1994 were in fact Hutu.
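
The Tutsi estimate rests on simple subtraction. A back-of-the-envelope version, which assumes the 1991 census figure approximates the 1994 Tutsi population (ignoring population change over those three years), is:

$$
\underbrace{500{,}000}_{\text{Tutsi living in Rwanda, 1991}} - \underbrace{300{,}000}_{\text{reported Tutsi survivors}} \approx 200{,}000 \ \text{Tutsi killed}
$$

If the official total of one million deaths is taken at face value, Tutsi would make up roughly a fifth of those killed:

$$
\frac{200{,}000}{1{,}000{,}000} = 20\%
$$

More generally, 200,000 Tutsi deaths would be a minority of all deaths whenever the total exceeds 400,000.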

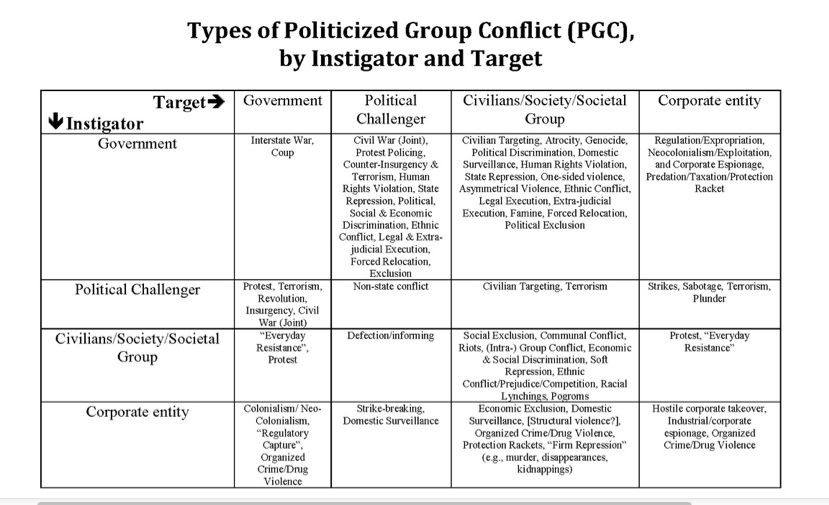

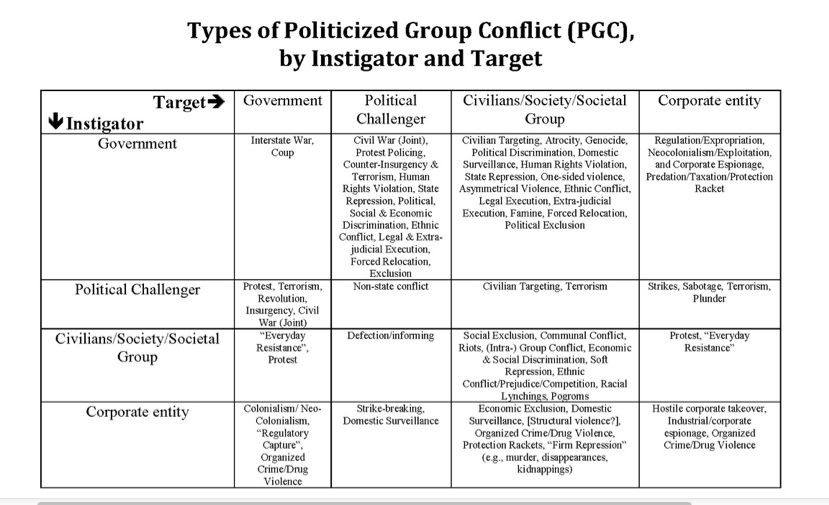

After 14 years of research, Davenport and Stam believe that there were several types of political violence occurring in Rwanda in 1994. The table below summarizes the different types of violence that were potentially involved (by perpetrator and victim). The larger project is trying to sort Rwandan political violence into each cell, which is incredibly difficult but useful for understanding exactly what happened.

The current controversy is not a new one. When Davenport and Stam presented their findings to the Rwandan government, they were told that they would not be welcome to return. When they presented their findings at the 10th and 15th anniversaries of the genocide, they received more criticism. At no point, however, was any new evidence or data provided that countered their narrative. In addition to the documentary, Davenport and Stam are working on a peer-reviewed journal article and a book for a broader audience.

Oct 17, 2014 | Elections, International, Uncategorized

Post developed by David Howell and André Blais.

The Comparative Study of Electoral Systems (CSES) project recently celebrated its 20th anniversary during a Plenary Session of collaborators in Berlin, Germany, with thanks to the Wissenschaftszentrum Berlin für Sozialforschung (WZB) for their generous organizational and financial support of the event.

At the event, 31 election studies from around the world made presentations about their research designs, plans, challenges, and data availability, with most of their slide sets being available for viewing at the Plenary Session website.

It was especially appropriate to return to Berlin this year, the city having hosted the initial planning meeting for the project 20 years previously. The original event was sponsored by the International Committee for Research into Elections and Representative Democracy (ICORE). Originally anticipated as an outgrowth of ICORE, the CSES grew and eventually replaced it.

A CSES module consists of a 10-15 minute questionnaire that is inserted into post-election surveys from around the world. The project includes collaborators from more than 60 countries, with 50 election studies from 41 countries having appeared in the CSES Module 3 dataset. In the CSES design, each module has a different theme intended to address a new “big question” in science. The project combines all of these surveys for each module, along with macro data about each country’s electoral system and context, into a single dataset for comparative analysis. There is no cost to download the data, and there is no embargo or preferential access. Every citizen in the world is able to download the data from the project’s website at the very same time as any of the project’s collaborators.
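
Conceptually, pooling a module means stacking every study's respondents into one table and attaching each study's country-level context to its rows. The sketch below shows only that idea; the study labels, field names, and values are hypothetical, and the real CSES variable names and file structure differ.

```python
# Hypothetical, heavily simplified inputs; real CSES variables differ.
survey_responses = {
    "Study A": [{"respondent": 1, "voted": True}, {"respondent": 2, "voted": False}],
    "Study B": [{"respondent": 1, "voted": True}],
}
macro_data = {
    "Study A": {"electoral_system": "proportional"},
    "Study B": {"electoral_system": "majoritarian"},
}


def pool_module(responses: dict, macro: dict) -> list[dict]:
    """Stack every study's respondents into one list of rows, tagging each
    row with its study and that study's country-level context."""
    pooled = []
    for study, rows in responses.items():
        context = macro[study]  # raises KeyError if a study lacks macro data
        for row in rows:
            pooled.append({"study": study, **row, **context})
    return pooled


pooled = pool_module(survey_responses, macro_data)
for row in pooled:
    print(row)
```

Because every row carries its study's context, a single analysis can compare, say, turnout across electoral systems.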

The recent Berlin meeting involved the first public presentation and discussion of content proposals submitted for CSES Module 5, for which data collection will be conducted during 2016-2021. After a public call, 20 proposals in total were received – a record number. Interested persons can view a presentation about the proposals, on topics ranging from corruption to populism to personality traits to electoral integrity. The theme for Module 5 will be selected, and the questionnaire developed and pretested, over the next one-and-a-half years. Prior modules have had as their themes: the performance of democracy, accountability and representation, political choices, and distributional politics and social protection.

As of the Berlin meeting in 2014, André Blais and the CSES Module 4 Planning Committee have handed over their role to a new CSES Module 5 Planning Committee, with John Aldrich as chair. John is an outstanding scholar who, in addition to having held leadership positions in many professional associations, has a long association with both the American National Election Studies and CSES.

While at its core CSES is a data gathering and dissemination organization, it has produced many other benefits as well. CSES considers it an important part of its mission to create a community for electoral researchers and to encourage election studies and local research capacity building around the world. CSES enables scholarship not just in political science but also in related disciplines: over 700 entries now appear in the CSES Bibliography on the project website.

The majority of funds for the CSES project are provided by the many organizations that fund the participating post-election studies. The central operations of the project are supported by the American National Science Foundation and the GESIS – Leibniz Institute for the Social Sciences.

We’d like to thank our many collaborators, funding organizations, and users for their support of the CSES, and we look forward to developing an engaging CSES Module 5!

Oct 14, 2014 | Current Events, Elections, National

Post developed by Katie Brown and Josh Pasek.

The Birther movement contends that Barack Obama was not born in the United States. Even after Obama released his short-form and long-form birth certificates to the public, which should have settled the matter, rumors to the contrary continued. Some contend Obama was born in Kenya. Others argue he forfeited American citizenship while living in Indonesia as a child.

What drives these beliefs?

Obama’s short form birth certificate, courtesy of whitehouse.gov

Center for Political Studies (CPS) Faculty Associate and Communication Studies Assistant Professor Josh Pasek, along with Tobias Stark, Jon Krosnick, and Trevor Tompson, investigated the issue.

The researchers analyzed data from a survey conducted by the Associated Press, GfK, Stanford University, and the University of Michigan. The survey asked participants where they believed Obama was born. The survey also asked about political ideology, party identification, approval of the President’s job, and attitudes toward Blacks.

In the survey, 21.7% of White Americans did not think Obama was born in the U.S.; their answers included “not in the U.S.,” “Thailand,” “the bush,” and, most frequently, “Kenya.”

Further analyses revealed that Republicans and conservatives were more likely to believe Obama was born abroad. Likewise, negative attitudes toward Blacks also correlated with Birther beliefs. Importantly, disapproval of Obama mediated the connection between both ideology and racism, on the one hand, and Birther beliefs, on the other.
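
To make “mediated” concrete, here is a minimal sketch on simulated data, not the authors’ survey data or model. It assumes a hypothetical world in which ideology affects Birther belief only through disapproval, fits ordinary least squares regressions with the standard library, and compares the total effect of ideology with its direct effect once disapproval is controlled. The variable names, effect sizes (0.8 and 0.7), and sample size are invented, and the sketch covers ideology only, not racial attitudes.

```python
import random


def center(v):
    m = sum(v) / len(v)
    return [x - m for x in v]


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def slope(x, y):
    """OLS slope of y on a single predictor x (both centered)."""
    return dot(x, y) / dot(x, x)


def two_predictor_slopes(x1, x2, y):
    """OLS slopes of y on x1 and x2 jointly (all centered), via 2x2 normal equations."""
    s11, s22, s12 = dot(x1, x1), dot(x2, x2), dot(x1, x2)
    s1y, s2y = dot(x1, y), dot(x2, y)
    det = s11 * s22 - s12 ** 2
    return (s1y * s22 - s2y * s12) / det, (s2y * s11 - s1y * s12) / det


rng = random.Random(42)
n = 20_000
# Simulated world: ideology -> disapproval -> Birther belief, no direct path.
ideology = [rng.gauss(0, 1) for _ in range(n)]
disapproval = [0.8 * x + rng.gauss(0, 1) for x in ideology]
birther = [0.7 * m + rng.gauss(0, 1) for m in disapproval]

x, m, y = center(ideology), center(disapproval), center(birther)
total = slope(x, y)                        # ideology -> belief, ignoring disapproval
a = slope(x, m)                            # ideology -> disapproval
direct, b = two_predictor_slopes(x, m, y)  # effects controlling for disapproval
indirect = a * b                           # part of the effect carried by disapproval

print(f"total={total:.2f} direct={direct:.2f} indirect={indirect:.2f}")
```

The total effect should land close to 0.8 × 0.7 = 0.56 while the direct effect sits near zero. In linear OLS, the gap between total and direct effects equals the indirect effect a × b exactly, which is what mediation means in this simple setup.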

The authors conclude that, “Individuals most motivated to disapprove of the president – due to partisanship, liberal/conservative self-identification, and attitudes toward Blacks – were the most likely to hold beliefs that he was not born in the United States.” Put simply, the key feature of Birthers wasn’t that they were Republicans or that they held anti-Black attitudes, but that they disapproved of the president. It was this disapproval that was most closely associated with the willingness to believe that President Obama was ineligible for his office.

The full article is published in Electoral Studies.